The Enterprise AI Workshop Playbook: How to Surface, Score, and Prioritize AI Across Your Organization

Author: Ross Vorbeck

16 March, 2026

When a large U.S. manufacturer approached us to help them understand where AI could create real value across their business, we did not start with technology. We started with people. The approach we used in that engagement is the same one we bring to every client, across industries, from financial services and healthcare to distribution, professional services, and beyond.

Over the course of five months, our team facilitated structured AI discovery workshops with ten departments at that client, including Operations, Finance, HR, Engineering, and IT. The process generated 180 distinct AI concepts, a ranked roadmap for every department, and a clear enterprise AI strategy. The methodology behind it is repeatable and industry-agnostic. Here is how it works, and what any organization should know before starting this journey.

The Challenge: AI Opportunity Is Everywhere, But Clarity Is Not

Most organizations know AI can help them. The harder question is: where, specifically, and in what order?

Without a structured process, AI conversations tend to either stay too high level or get derailed by IT constraints before business value is even defined. Teams end up chasing tools rather than solving problems.

What every client needs is a methodology that surfaces real operational pain, scores ideas consistently across every department, and produces a roadmap that leadership can actually execute. That is exactly what the workshop program was designed to deliver.

The Workshop Structure: Consistent, Collaborative, and Repeatable

Each workshop ran approximately three hours and followed the same format across all ten departments. Consistency was deliberate: it meant results could be compared and rolled up into a single enterprise view.

Independent Brainstorming

Participants brainstormed independently using assigned color-coded sticky notes in Miro before sharing with the group. This approach intentionally prevents groupthink. Every voice contributes ideas without being anchored to what someone else said first.

Clustering and Swim Lanes

Each participant presented their top idea to the group. Similar concepts were grouped into broader opportunity areas and organized into five swim lanes by type of AI solution:

- Automation and Efficiency — workflow automation, process orchestration, and task elimination

- Augmentation and Decision Support — forecasting, predictive analytics, and AI-assisted recommendations

- Content and Creation — document generation, reporting automation, and AI-assisted communications

- Interaction and Interface — chatbots, search assistants, and conversational AI tools

- Miscellaneous — use cases that span categories or did not fit neatly into the above

This gave every team a visual map of where AI could apply across their function.

Live Scoring

Each idea was then scored on two independent axes:

- Value Score: voted on by department participants, the people closest to the problem. Scores reflected real operational pain and business need.

- Feasibility Score: assessed independently by the Business Technology team after value was established. BT evaluated technology maturity, data accessibility, and fit within current systems.

Priority Score = Value + Feasibility (each 1-10, maximum combined score of 20). Higher scores fed directly into the roadmap. Lower scores were backlogged for future phases. The result was a ranked priority list based entirely on merit, not politics or gut feel.
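For readers who want to reproduce the arithmetic, here is a minimal Python sketch of the scoring rule above. The `Idea` class, the `rank` helper, and the tie-breaking behavior are illustrative assumptions, not part of the workshop tooling; only the 1-10 ranges and the sum come from the method itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Idea:
    """One workshop concept with its two independent scores (hypothetical structure)."""
    name: str
    value: int        # 1-10, voted on by department participants
    feasibility: int  # 1-10, assessed by the Business Technology team

    def __post_init__(self):
        # Reject scores outside the 1-10 range used in the workshops.
        for label, score in (("value", self.value), ("feasibility", self.feasibility)):
            if not 1 <= score <= 10:
                raise ValueError(f"{label} score must be between 1 and 10, got {score}")

    @property
    def priority(self) -> int:
        # Priority Score = Value + Feasibility, so the maximum is 20.
        return self.value + self.feasibility


def rank(ideas):
    """Return ideas ordered from highest to lowest priority score.

    Assumption: ties keep their input order, because sorted() is stable.
    The workshops may have broken ties differently.
    """
    return sorted(ideas, key=lambda idea: idea.priority, reverse=True)
```

Calling `rank` on a department's ideas returns the list in roadmap order, with the lowest-scoring items at the end as backlog candidates.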

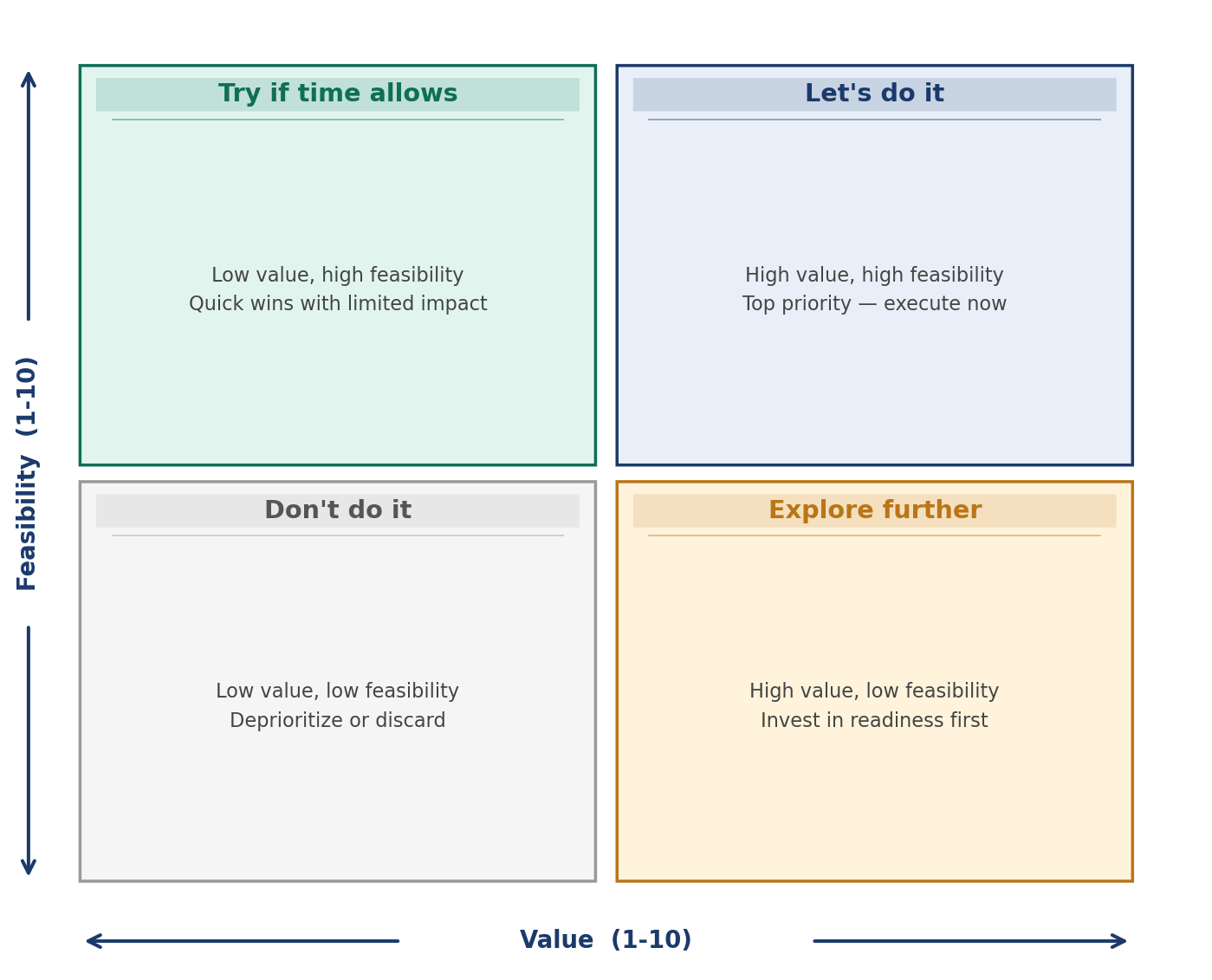

Scoring and Prioritization: The Value/Feasibility Matrix

Every idea was plotted against two axes to determine where it landed in the priority stack. The matrix below illustrates how opportunities were categorized and actioned:

The top-right quadrant, high value and high feasibility, became the near-term roadmap. High-value ideas with low feasibility were flagged for additional exploration before execution. Low-value, high-feasibility ideas were treated as quick wins only if capacity allowed. Low-value, low-feasibility ideas were deprioritized entirely.

This framing gave leadership a defensible, visual rationale for every sequencing decision.
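The four quadrants can be expressed as a small classification function. This is a sketch under a stated assumption: the cutoff between "low" and "high" is not specified in the method, so the `threshold` of 6 below is a placeholder to adjust, and the function name and return labels are illustrative.

```python
def quadrant(value: int, feasibility: int, threshold: int = 6) -> str:
    """Map a (value, feasibility) pair to one of the four quadrants.

    Assumption: a score at or above `threshold` counts as "high".
    The default of 6 is a placeholder, not a figure from the workshops.
    """
    high_value = value >= threshold
    high_feasibility = feasibility >= threshold

    if high_value and high_feasibility:
        return "near-term roadmap"
    if high_value:
        # High value, low feasibility: explore further before committing.
        return "explore before execution"
    if high_feasibility:
        # Low value, high feasibility: only worth doing if capacity allows.
        return "quick win if capacity allows"
    return "deprioritize"
```

For example, an idea scored 9 for value and 3 for feasibility lands in the "explore before execution" quadrant, which matches how high-value ideas with unready data were handled.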

What 180 Ideas Tell You

Ten departments. 180 AI concepts. Here is what stood out.

First, every department had AI opportunity. There was no function where the team struggled to find ideas. The challenge was never generating concepts. It was structuring and prioritizing them.

Second, the highest-value ideas clustered around a handful of recurring themes:

- Document analysis and knowledge retrieval: reducing time spent searching for information across unstructured sources

- Reporting automation: eliminating manual assembly of recurring reports and dashboards

- Forecasting and predictive analytics: improving demand planning, resource allocation, and risk visibility

- Workflow automation: reducing friction in repetitive, rule-based operational processes

- Operational monitoring: real-time visibility into quality, compliance, and production health

Third, feasibility was the honest filter. Some of the most exciting ideas scored lower not because they lacked value, but because the underlying data was not ready or the technology was not yet mature enough to deliver reliably. The scoring process surfaced that reality early, before any development dollars were committed.

Buy vs. Build: The Decision That Shapes Everything

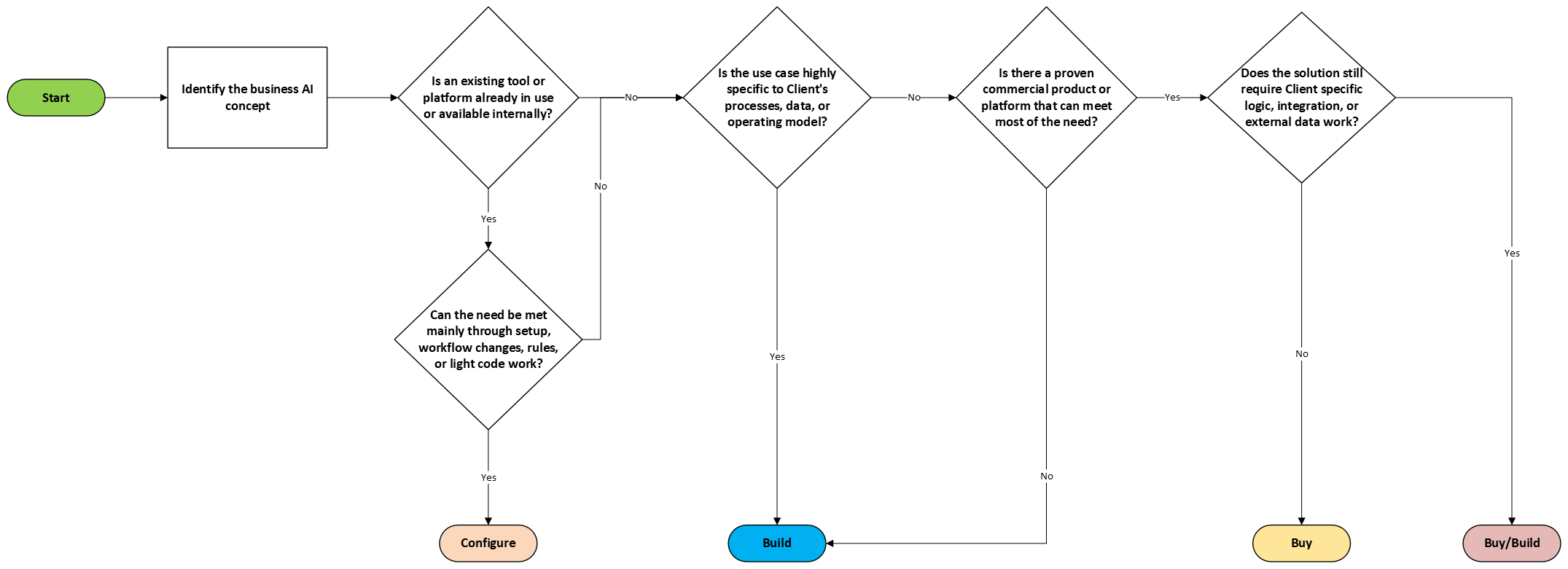

Every prioritized idea went through a buy versus build assessment. This step is critical and is often skipped or done too superficially.

Our framework evaluated four paths:

- Configure: Extend tools the organization already has, such as Microsoft Power Automate or existing platforms.

- Buy: A mature commercial product exists that addresses the need without significant customization.

- Build: The use case is specific enough to the organization’s processes, data, or operating model that custom development delivers superior long-term value.

- Hybrid (Buy/Build): A commercial platform provides the foundation, but custom logic, integrations, or data pipelines are required on top.
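One possible way to read the four paths as a decision order is sketched below. The three yes/no inputs and the order in which they are checked are assumptions made for illustration; the actual assessment also drew on workshop input and market research, which a function like this cannot capture.

```python
from enum import Enum


class Path(Enum):
    CONFIGURE = "Configure"
    BUY = "Buy"
    BUILD = "Build"
    HYBRID = "Hybrid (Buy/Build)"


def recommend_path(existing_tool_fits: bool,
                   commercial_product_exists: bool,
                   needs_custom_work: bool) -> Path:
    """Suggest a delivery path from three simplified yes/no answers.

    Assumed order (hypothetical): prefer tools already owned, then a
    commercial product, and fall back to custom development last.
    """
    if existing_tool_fits:
        return Path.CONFIGURE
    if commercial_product_exists:
        # A product that still needs custom logic, integrations, or
        # data pipelines on top is treated as the hybrid path.
        return Path.HYBRID if needs_custom_work else Path.BUY
    return Path.BUILD
```

In practice each input is itself a judgment call, so a function like this is best used to record the reasoning behind a recommendation rather than to make it.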

The workshop conversations informed this assessment directly. When participants identified existing software they had already evaluated or vendors they were aware of, that input shaped the recommendation. We supplemented their knowledge with our own market research to validate whether viable buy options actually existed and could scale.

Below is the process we followed when making these buy/build decisions.

The Foundation: An Enterprise AI Agent Platform

One of the most important outcomes from this engagement was the recommendation to build an Enterprise AI Agent Platform before scaling individual use cases.

Here is the problem we kept running into: if you build each AI use case as a standalone application, you end up with fragmented tools, duplicated infrastructure, inconsistent governance, and a growing maintenance burden. Every new use case starts from scratch.

A centralized AI agent platform like Databricks solves this by providing a shared framework where AI capabilities are deployed as modular agents. Each agent connects to enterprise data, integrates into business workflows, and operates within a consistent governance and security model. New use cases can be deployed faster because the foundation already exists.

For any organization operating across multiple departments with a growing AI backlog, this architecture is not optional. It is what makes sustainable, enterprise-scale AI adoption possible.

Sequencing the Roadmap: Foundation First, Then Scale

A common mistake in AI programs is trying to execute everything at once. Departments get excited, leadership wants results, and teams end up overloaded without enough delivered to show for it.

Our roadmap approach sequenced work deliberately:

- Stand up the enterprise AI platform and foundational initiatives first. These create the architecture, governance patterns, and reusable services that every department use case will depend on.

- Execute no more than two concepts per department at a time, depending on complexity, data readiness, and available business capacity.

- Reuse components, integration patterns, and lessons learned from earlier implementations to accelerate later ones.
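The sequencing rules above can be sketched as a simple wave planner. This is a simplification under stated assumptions: every concept in a wave is treated as finishing together, the cap is applied as a flat two per department regardless of complexity or data readiness, and the function name and tuple layout are hypothetical.

```python
from collections import defaultdict


def plan_waves(foundation, concepts, max_active=2):
    """Group work into delivery waves.

    foundation: list of platform/foundational initiative names.
    concepts:   list of (department, name, priority_score) tuples.
    max_active: cap on concurrent concepts per department (two, per the text).

    Returns a list of waves; each wave is a list of (owner, name) pairs.
    Wave 0 holds the foundation work. Assumption: waves run one after another.
    """
    queues = defaultdict(list)
    for department, name, priority in concepts:
        queues[department].append((priority, name))
    for queue in queues.values():
        # Highest priority first; ties keep their input order.
        queue.sort(key=lambda item: item[0], reverse=True)

    waves = [[("Foundation", name) for name in foundation]]
    while any(queues.values()):
        wave = []
        for department in sorted(queues):
            batch = queues[department][:max_active]
            queues[department] = queues[department][max_active:]
            wave.extend((department, name) for _, name in batch)
        waves.append(wave)
    return waves
```

A department with three scored concepts would see its top two in the first departmental wave and the third in the next, after the foundation wave has run.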

Build effort was estimated directionally across four tiers, ranging from one to three months for simple automations and dashboards up to nine to twelve months for complex predictive models, large-scale data unification, and production-grade optimization requiring heavy change management. These estimates assumed a delivery team of two developers and one QA resource, and they were based on comparable implementations rather than detailed technical design.

The example roadmap below illustrates how this sequencing looks across multiple departments over an 18-month horizon:

Foundation and platform initiatives run first (shown in navy). Departmental use cases follow in phased waves, color-coded by delivery approach: Build, Configure, Buy, and Buy/Build. This structure avoids resource overload, enables reuse, and gives leadership a clear picture of when value will be delivered.

Key Lessons for Manufacturers Starting This Journey

After five months and 180 ideas across ten departments with our manufacturing client, here is what we would tell any organization considering a structured AI program:

- Start with people, not technology. The best AI ideas come from the employees doing the work every day. A structured workshop process draws that knowledge out in a way that ad hoc conversations never will.

- Separate value from feasibility, and let different groups score each. Department participants understand business impact. IT and business technology teams understand what is technically achievable. Combining both views produces a realistic, defensible priority list.

- Be honest about data readiness. AI is only as good as the data behind it. Many high-value ideas will require data cleanup, integration work, or new pipelines before they can be realized. Surfacing that early saves months of frustration downstream.

- Build the foundation before you scale. An enterprise AI agent platform is an investment that pays back with every additional use case you deploy. Do not skip it to chase short-term wins.

- Pace the roadmap. Up to two active projects per department is a meaningful and manageable pace for most organizations. Trying to do more typically stretches teams too thin and dilutes results.

Ready to Map Your AI Opportunity?

Whether you are a manufacturer, a financial services firm, a healthcare organization, or a distribution company, the path to AI at scale starts with the same question: where does AI create the most value for your people and your operations? Xorbix can help you answer it. Our workshop methodology is designed to work at any scale, from a single department to an enterprise-wide engagement, and produces deliverables your leadership team can act on.